In recent years, the emergence of Large Language Models (LLMs) has brought about significant shifts in the daily routines of consumers. Individuals can now undertake a diverse range of tasks, such as retrieving information, composing text, and refining documents through these powerful language tools. This integration of LLMs into daily life has resulted in notable boosts in productivity, both at work and in personal endeavors.

However, it’s important to recognize that not all consumers have experienced these benefits equally. Many people around the world who speak less common languages can't interact with LLMs at all, primarily because the language models available for those languages are inadequate. Of the 7,000 languages currently spoken in the world, the largest multilingual LLMs have been trained on fewer than a hundred, leaving many languages and their speakers behind.

Supporting non-English languages requires high-quality, abundant data sources, which can be difficult to find and access. Models for these languages not only perform worse; researchers at Brown University have also reported that they are more likely to give unethical responses, which makes them more vulnerable to malicious attacks.

Why do we have underrepresented languages in LLMs?

The performance of LLMs tailored for Low Resource Languages (LRL) is hindered by several key challenges.

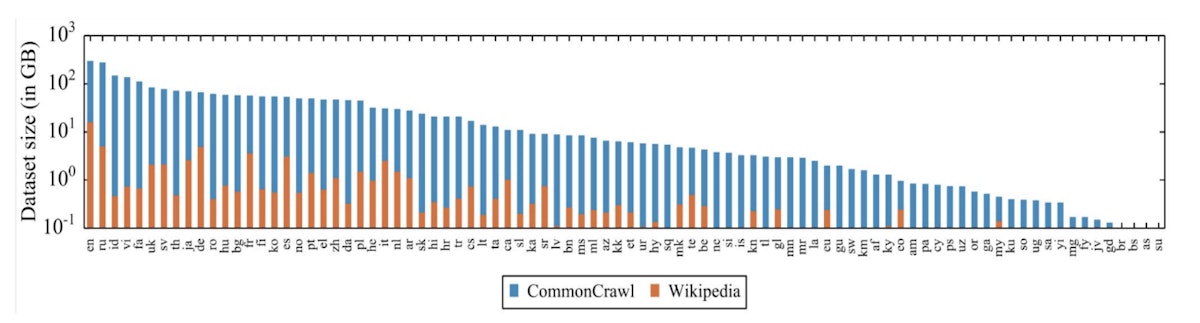

First, the foundation models behind many LLMs rely on data scraped from the internet, which often lacks comprehensive coverage of LRLs. The accompanying graph shows how data on the internet is distributed across language groups. While more common languages have hundreds of gigabytes of data potentially available for training models, the languages in the tail of the graph have only hundreds of megabytes.

The long tail of multilinguality: a few high-resource languages and many sparsely populated languages. Image originally published in https://arxiv.org/pdf/1911.02116.pdf

This limitation is further magnified by the absence of instruction datasets for fine-tuning in many LRLs. An instruction dataset consists of a set of questions paired with ideal answers, and it is a crucial part of LLM training, in this case in specific languages. This is how the model learns to follow instructions. Without it, a model can only predict the next word in a sequence rather than assist humans with complex questions and problem-solving tasks.

This matters because LLMs are trained in sequential steps. The first step is to learn the language by reading a large amount of unannotated text, which gives the model the ability to predict the next word in a sequence. The second step is tailoring this predictive behavior to follow specific instructions, such as answering questions, writing summaries, or extracting data. This is why fine-tuning datasets are so important: their quality determines how well an LLM can assist users with the tasks they need.
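To make the idea of an instruction dataset concrete, here is a minimal sketch of what one record might look like and how it could be rendered into training text. The field names follow the instruction/context/response layout used by the Dolly dataset; the `InstructionExample` class, the `to_training_text` helper, and the `###` prompt template are illustrative assumptions, not a format prescribed by any particular model.

```python
from dataclasses import dataclass


@dataclass
class InstructionExample:
    """One instruction-tuning record: a task, optional context, and the ideal answer."""
    instruction: str
    response: str
    context: str = ""


def to_training_text(example: InstructionExample) -> str:
    """Render a record as one prompt/answer string (hypothetical template)."""
    parts = [f"### Instruction:\n{example.instruction}"]
    if example.context:
        # Context is optional; many records are plain question/answer pairs.
        parts.append(f"### Context:\n{example.context}")
    parts.append(f"### Response:\n{example.response}")
    return "\n\n".join(parts)


example = InstructionExample(
    instruction="Summarize the text in one sentence.",
    context="Swahili is spoken in many countries in East and Central Africa.",
    response="Swahili is widely spoken across East and Central Africa.",
)
print(to_training_text(example))
```

During fine-tuning, the model sees many strings like this one and learns to continue the text after the response marker with an answer, rather than with an arbitrary next word.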

In the following section, we will present a method to create a high-quality dataset for Swahili that can be used to fine-tune the LLM for this language. The method can be applied to any low-resource language.

Innovative pipeline to gather data for LRLs

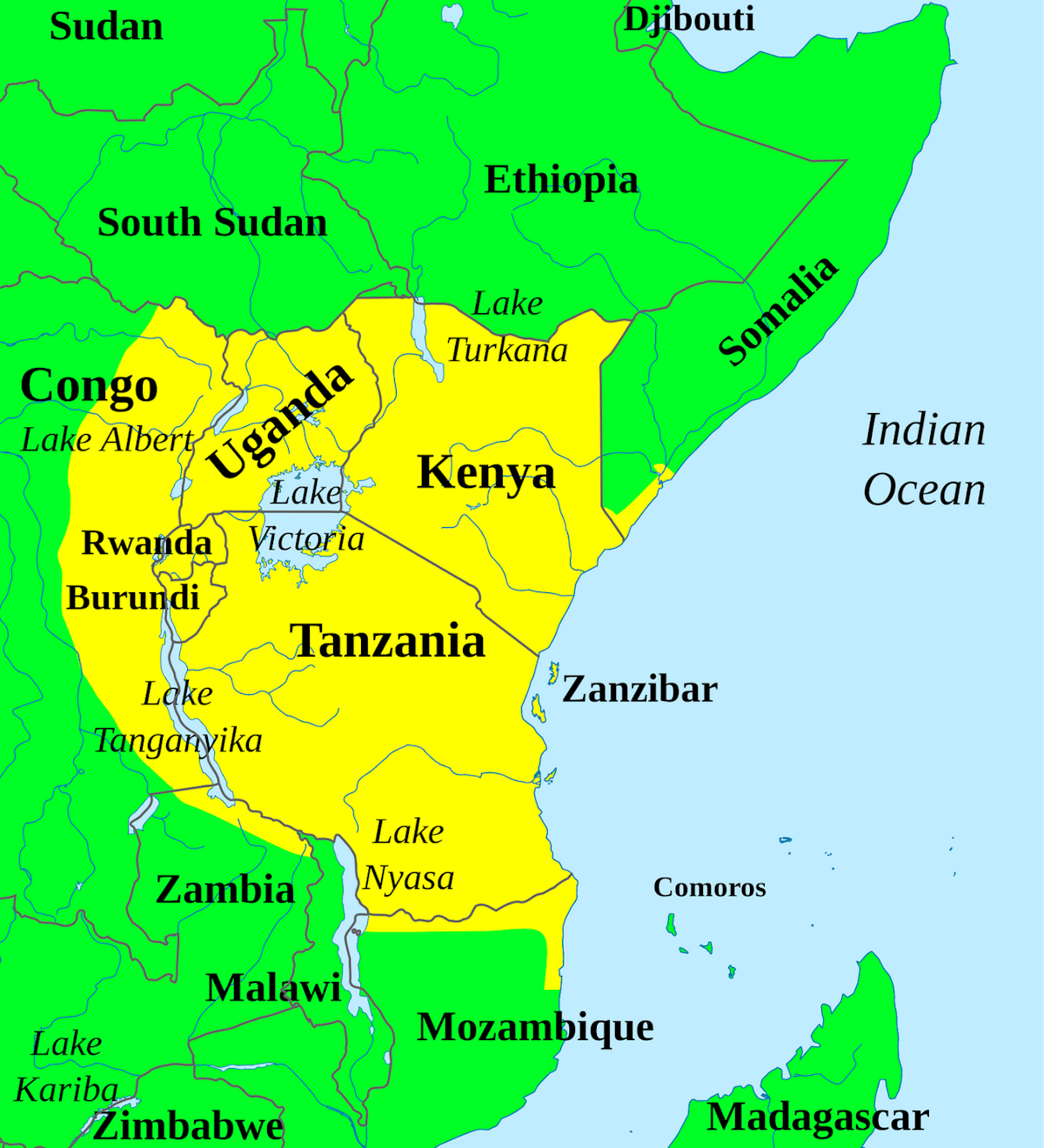

Swahili is a language spoken by over 200 million people across 14 different African countries and is the official national language in Tanzania, Kenya, Uganda, and the Democratic Republic of the Congo. It belongs to the group of low-resource languages and is an example of a language that does not have an out-of-the-box instruction dataset for LLM fine-tuning.

In general, three approaches exist to create a fine-tuning dataset for a language. The first one is the direct generation of a dataset by assessors, in this case, language experts, which requires developing both questions and ideal answers in the desired language. This can be challenging for the Swahili language because assessors need to be high-level experts and the process is generally expensive.

Another potential solution is taking an existing instruction dataset in English and translating it into Swahili. This could be done by translators who speak both Swahili and English, but that can also be time- and resource-intensive. An automatic translator could be used instead, but on its own it typically produces insufficient or poor-quality results.

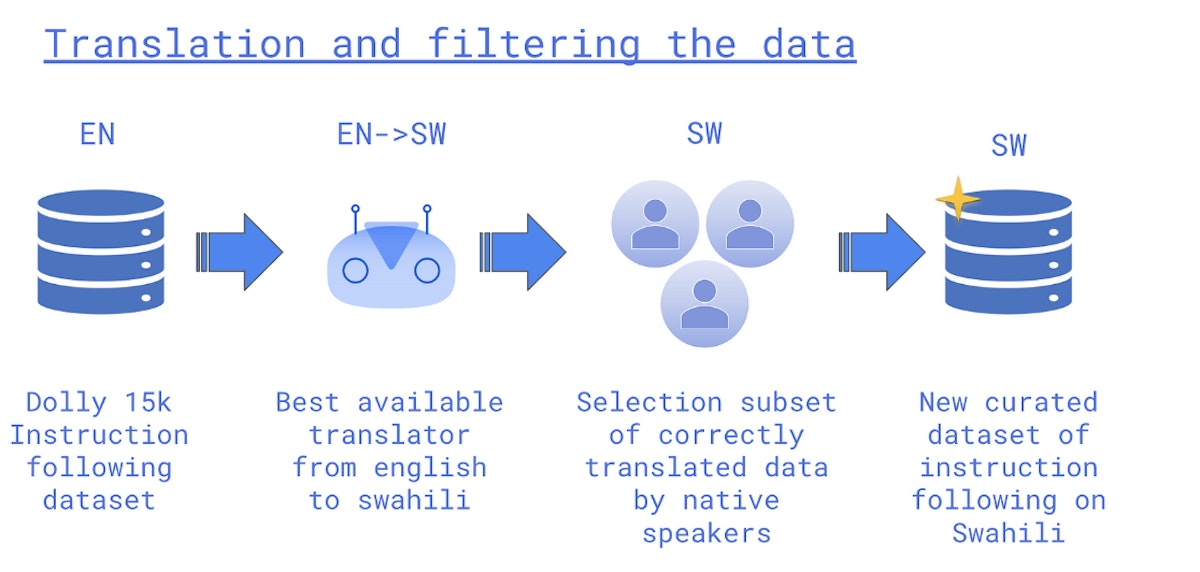

The third approach combines automated translation with human validation, offering a cost-efficient and scalable way to build the dataset. Human validation is critical to ensure that LRL models are accurate, reflect local customs and norms, and are useful to the communities that will be using them. This method uses the best available automatic translator from English to Swahili and then asks native Swahili speakers to filter out examples that do not meet quality standards.

Toloka recently undertook a development project in which it created an 11,000-example fine-tuning dataset for Swahili from the original 15,000-example Dolly dataset. Each data point, consisting of a prompt and an answer, was translated from English to Swahili using automatic translation, which initially produced 15,000 question-answer pairs in Swahili. Native speakers were then asked to remove low-quality pairs, leaving a Swahili fine-tuning dataset of 11,000 instances.
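The translate-then-validate pipeline described above can be sketched in a few lines. This is only an illustration under stated assumptions: the `translate` callable stands in for whatever machine translation system is used, and the majority-vote rule in `filter_by_votes` is a hypothetical way to aggregate native-speaker judgments, since the article does not specify how the reviews were combined.

```python
from typing import Callable, Dict, List, Tuple

Pair = Tuple[str, str]  # (prompt, answer)


def translate_pairs(pairs: List[Pair], translate: Callable[[str], str]) -> List[Pair]:
    """Machine-translate both sides of every prompt/answer pair."""
    return [(translate(prompt), translate(answer)) for prompt, answer in pairs]


def filter_by_votes(
    pairs: List[Pair],
    votes: Dict[int, List[bool]],
    min_approval: float = 0.5,
) -> List[Pair]:
    """Keep pairs whose share of 'acceptable' votes is strictly above min_approval.

    `votes` maps a pair's index to the judgments of native-speaker reviewers.
    Pairs with no votes are dropped (an assumption of this sketch).
    """
    kept = []
    for index, pair in enumerate(pairs):
        pair_votes = votes.get(index, [])
        if pair_votes and sum(pair_votes) / len(pair_votes) > min_approval:
            kept.append(pair)
    return kept


# Toy stand-in for a real English-to-Swahili translator (hypothetical).
def fake_translate(text: str) -> str:
    return f"[sw] {text}"


english_pairs = [
    ("What is the capital of Kenya?", "Nairobi"),
    ("Name a primary color.", "Red"),
]
swahili_pairs = translate_pairs(english_pairs, fake_translate)

# Three reviewers per pair: True means the translation is acceptable.
reviewer_votes = {0: [True, True, False], 1: [False, False, True]}
clean_pairs = filter_by_votes(swahili_pairs, reviewer_votes)
print(clean_pairs)
```

Collecting several votes per pair is one way to reduce the impact of a single reviewer's mistake; a stricter threshold trades dataset size for quality.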

The dataset was then used to fine-tune mT5, one of the top-performing multilingual language models for Swahili, and the fine-tuned model showed significant performance gains for this language. Fine-tuning on the dataset boosted accuracy and F-score (a measure of predictive performance) on classification tasks. More importantly, it significantly increased two metrics on generative tasks, where the model must respond to open questions: ROUGE (Recall-Oriented Understudy for Gisting Evaluation), a set of metrics used for evaluating automatic summarization and machine translation in NLP, and chrF++, a variant of the character n-gram F-score (chrF). This experiment shows the potential for improving LLM performance in LRLs and opens up a path toward building truly multilingual models.
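For readers unfamiliar with ROUGE, the following is a simplified sketch of its most basic variant, ROUGE-1, which compares the individual words in a model's output with those in a reference answer. It assumes plain whitespace tokenization and lowercasing; real evaluations typically rely on an established library with proper tokenization and stemming, and the `rouge_1` function here is not the implementation used in the experiment described above.

```python
from collections import Counter


def rouge_1(reference: str, candidate: str) -> dict:
    """Compute unigram-overlap precision, recall, and F1 (simplified ROUGE-1)."""
    ref_counts = Counter(reference.lower().split())
    cand_counts = Counter(candidate.lower().split())

    # Counter intersection keeps the minimum count per word (clipped overlap).
    overlap = sum((ref_counts & cand_counts).values())

    recall = overlap / max(sum(ref_counts.values()), 1)
    precision = overlap / max(sum(cand_counts.values()), 1)
    f1 = 0.0 if overlap == 0 else 2 * precision * recall / (precision + recall)
    return {"precision": precision, "recall": recall, "f1": f1}


scores = rouge_1(
    reference="the cat sat on the mat",
    candidate="the cat is on the mat",
)
print(scores)
```

Recall answers "how much of the reference did the model reproduce?", while precision answers "how much of the model's output appears in the reference?". chrF applies a similar idea to character n-grams instead of whole words, which tends to be more forgiving for morphologically rich languages.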

Creating a More Inclusive AI Ecosystem

As developers and organizations strive to create a more inclusive AI ecosystem, evaluation becomes even more critical, as does human involvement in training LLMs. Cohere’s recent launch of Aya, a language model that supports over one hundred languages, including Swahili and other LRLs, exemplifies this commitment. Addressing data scarcity and improving model performance for LRLs is an important step toward building more inclusive and responsible AI systems that serve diverse linguistic communities worldwide.

This article was originally published by Magdalena Konkiewicz on HackerNoon.