Artificial Intelligence (AI) is all the rage in corporate circles these days. The CEO of Google has stated that AI will have more positive impact for humanity than the invention of fire or electricity. Investors are tripping over themselves to pump money into AI projects in a variety of areas including health care, construction, finance, and customer service.

If this all sounds vaguely familiar, we saw this kind of hype hypnotize the mainstream media when the Internet was still new and shiny. Now, of course, we’re wrestling with how such a promising technology devolved into a netherworld of hacking, hate speech, exploitation of personal data, “dark webs”, misinformation, and surveillance despite the glowing promises of techno-utopians.

The Hype Machine Returns

And yet here we are again, with a growing investment bubble being fed by the same sense of an inexorable future and a world utterly transformed by a technology that most people don’t really understand. Whether this trend will follow the same trajectory as the Internet is still uncertain. But it’s clear that this time around most of the enthusiasm is coming from corporate entities that stand to benefit by using staggering levels of computing power for social engineering and economic gain.

An artifact of this enthusiasm was recently on full display in The Economist’s March 31 issue on AI, where an editorial gushed about the benefits of using AI in the workplace. What are those benefits? Mostly they seem to involve the ability of employers to surveil and control their employees in unprecedented ways. The opinions rendered, at least, were forthright:

“Using AI, managers can gain extraordinary control over their employees. Amazon has patented a wristband that tracks the hand movements of warehouse workers and uses vibrations to nudge them into being more efficient. Workday, a software firm, crunches around 60 factors to predict which employees will leave. Humanyze, a startup, sells smart ID badges that can track employees around the office and reveal how well they interact with colleagues.”

While the editorial goes on to talk about some of the downsides of AI, the sing-song tone praising its benefits in the workplace is disturbing, as is the lack of reaction to Amazon’s draconian approach to motivating workers. The article goes on to blithely inform us that “Surveillance at work is nothing new… but AI makes ubiquitous surveillance worthwhile, because every bit of data is potentially valuable.”

Worthwhile? Really? And what about that jolly phrase “ubiquitous surveillance”? Perhaps The Economist has not gotten the memo: the powerful and intrusive surveillance that has been slipped under the door by tech giants like Google and Facebook is getting a long overdue assessment by the media, the computer industry itself, and millions of exploited users (in the case of Facebook, about two billion of them worldwide).

The idea that social media’s surveillance model has become not only a threat to democracy but a huge social and cultural pothole has even forced latter-day oligarchs like Mark Zuckerberg to testify in front of the US Congress. Facebook has now lost almost 10% of its user base over a few months. Clearly, people are fed up with having their personal data tracked, collected, and manipulated without their full and knowing consent.

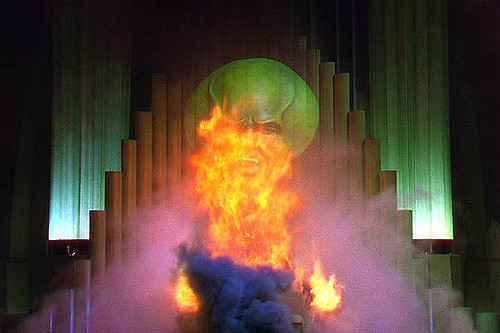

What’s Behind the Wizard’s Curtain?

In analyzing the new AI landscape, the trick is to find out what’s behind the Oz-like curtain. One of the companies The Economist references, Humanyze, buries its surveillance mission in a flurry of techspeak on an impenetrable web site. Viewing it, you couldn’t easily infer that what the company offers is “smart ID badges that can track employees around the office and reveal how well they interact with colleagues.” But the many references to behavioral science point to the most concerning aspect of the misapplication of big data and AI: social engineering and control.

The use of AI for social control is in many ways the elephant in the room. The Humanyze web site says that “there is an emerging field of cognitive science that analyzes individual and team behavior at scale… computational social science. By collecting large amounts of data, scientists can uncover new behavioral patterns.”

But not just scientists: let’s not forget employers and governments. The Chinese government is already exploiting this potential with the use of a “social credit score” that ranks the societal worth of every citizen and accordingly doles out appropriate benefits or punishments. It’s also clear that the mainstream media often misses one of the most important aspects of the Cambridge Analytica saga: it’s not just that personal data was being collected but that psychographic profiles were being developed to deviously manipulate the opinions of Facebook users.

The great untold story of our time is that computer technology to date has concentrated unprecedented economic and political power in the hands of those who control it in its most powerful instantiations. AI technology will only exacerbate that trend and widen the gap between high-end and low-end users. Especially concerning are systems that purport to predict our behavior, which of course impart a kind of God-like omniscience to the AI technocrats using them to enhance the bottom line.

Unfortunately, there seems to be a lack of compelling cases for exactly how AI will improve the quality of life for average citizens, along with the same lack of specifics, and the same semantic fog, that accompanied the rise of the Internet. Will AI ennoble or enslave us? Based on the track record of how we have used the gift of computer and Internet technology to date, a strong dose of skepticism is in order.