So. Many. Articles. It’s hard to keep track of the kind of things computers are capable of doing, or are supposedly going to be able to do by the year twenty-twenty-something.

But despite the AI hype there are a few ideas which will help keep one’s feet on the ground.

1) No one understands it

Seriously, no one. Not even the developers who create it. The whole point of machine learning, deep learning and AI is that you accept the computer can do it better than you can. So you program it with a set of rules that operate like boundaries, giving the computer a sphere within which to operate. But the computer then navigates said sphere itself, and we don’t necessarily get to find out how it’s doing it.

This is why the Turing Test is so important to the process: we can’t look into the code and compare it to the way our neurons work in order to verify that it is indeed intelligent. So we have to judge it on end results.

We have to have a conversation with a computer and see if we can tell the difference between a conversation with it and a human conversation. We are the test, the lab rats. That’s how far we’ve come as a species. Worried about being controlled by robots in the future? Fear not, it’s happened, we’ve already made ourselves implicitly servile before they’re even smart enough to control us. Asking a computer “can you manipulate me?” would be too simple.

2) It’s all much further away than anyone makes out

Pretty much every article about a thing that’s happened in the AI sector says something big and “newsworthy” is going to happen by 2020 or 2025. We’re in for two big years! But realistically this is a nonsense. Even driverless cars – up until now the most raved-about use for AI – are still facing legal and safety concerns.

Moore’s Law might apply to technology, but not to politics; groups of lawmakers in various countries will have to drop everything and hammer together a completely new set of laws for something as invasive to ordinary life as driverless cars. And though they have started, you know there will be plenty more arguments ahead (especially in the US political system), and even then driverless cars are still only one use for AI.

A lot of the uses at the moment are relatively narrow. We’re quite a way off having a general-purpose AI bot that can learn anything and, crucially, pass the Turing Test. That would require building a computer that could mimic a human brain, but with way more power.

But wowing the consumer market with entrancing near-term promises sounds like a damn good way to get funding.

3) The first uses will probably be boring, industry stuff

It’s far more likely that self-navigating shipping tankers or advanced factory machinery are introduced by 2020 or 2025 than advanced consumer products. In these areas businesses have a pretty clear understanding of what they need and how it needs to be done.

Most businesses will have process charts and workflows and all sorts of well-defined and specific documentation. On the one hand this makes the technology easier to develop, and on the other it makes life much easier for the politicians. There are just fewer “what ifs.”

When the AI sector creates products for the consumer market, it will have to take into account the whims and lunacy of people like you and me.

4) Elon Musk is worth listening to

Shocking, I know. But why? Well first of all let’s take all of his comments and interviews and his silly little spat with Mark Zuckerberg in context. He is one of those people who fits the description of being a brand in and of himself, and he’s bloody good at it. One part of his brand is AI: he has an AI company, he wants AI to do well, so at least part of the reason he spends so much time “in the media” – I believe that’s the expression – must be self-serving.

In any case, he’s a pretty impressive individual. As articulated somewhat comprehensively – or perhaps gushingly – by Twitter CEO Dick Costolo in a CNBC interview, “I’m laughing because [Musk’s] ability to think cogently and thoughtfully about such a wide range of topics, while running these multiple companies, and seeming to be running them well is just, I mean, it makes you shake your head. It’s remarkable.”

Aside from all that he’s arguably, and paradoxically, the only visionary the venerated tech sector has left to offer. Facebook, Apple, Amazon, and Google have – in terms of groundbreaking innovations – produced the square root of naff all for years.

It could be said that pretty much the whole industry is still dining out on the success of the smartphone. Meanwhile, Musk is charging ahead with a space program, artificial intelligence, electric cars, and something called a “hyperloop.”

5) It’s not all lols and larks

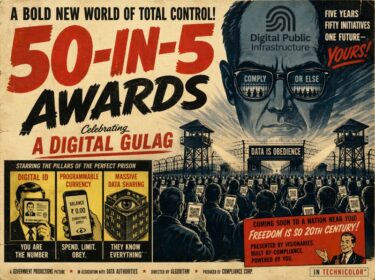

Back to this stuff about AI being humanity’s downfall. Musk pointed out that free market competition is driving AI development at an unhealthy rate, and that lawmakers need a chance to catch up. Going at its current lick, its development might be so quick that lawmakers may never get that chance before something fairly horrific happens.

Despite the hype this was a genuine moment of responsibility over profits from Musk, and he should be applauded for saying it even if he didn’t make the ultimate sacrifice and promise to slow his own company’s development regardless of regulation. The problem is AI is so new, so different, that no current statute book contains any laws that would be of any use, even by extension.

When the godfather of sci-fi, Isaac Asimov, published Runaround in 1942 he took the first stab at trying to create some meaningful rules. They’re still known as The Three Laws of Robotics:

- A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

- A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

You see what I mean about new? Sometime in the future we will co-exist with entities smarter, more capable, stronger and more organised than we are.

I’d go so far as to say that AI requires a moment akin to the creation of the US Constitution. A bunch of philosophers, academics, wizened politicians and probably Elon Musk need to lock themselves in a room, free of lobbyists, and spend a long few months having a massive argument.

They can then emerge with a document whose boundaries they’re sure they’ve fully explored, and present it to the public.