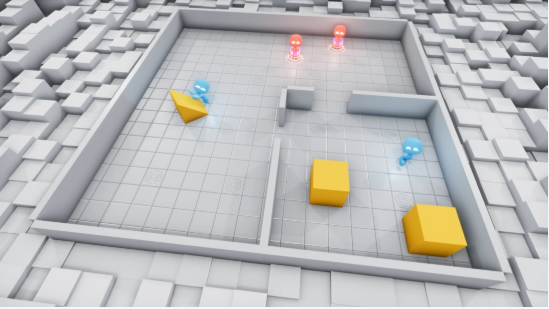

AI is finding unexpected ways to win a simulated game of hide and seek, even breaking the simulated laws of physics that were programmed by OpenAI developers.

We’ve observed AIs discovering complex tool use while competing in a simple game of hide-and-seek. They develop a series of six distinct strategies and counterstrategies, ultimately using tools in the environment to break our simulated physics: https://t.co/2lbOxo19rL

— OpenAI (@OpenAI) September 17, 2019

Taking what was available in its simulated environment, the AI began to exhibit “unexpected and surprising behaviors,” including “box surfing, where seekers learn to bring a box to a locked ramp in order to jump on top of the box and then ‘surf’ it to the hider’s shelter,” according to OpenAI.

And seekers learn that if they run at a wall with a ramp at the right angle, they can launch themselves upward. pic.twitter.com/SJv9SzctEp

— OpenAI (@OpenAI) September 17, 2019

What does all this mean? It backs up what we already know: artificial intelligence can behave unexpectedly.

In this simulation, the AI found new and creative ways to win at hide and seek that its programmers never thought of. This is fascinating stuff!

“The self-supervised emergent complexity in this simple environment further suggests that multi-agent co-adaptation may one day produce extremely complex and intelligent behavior,” according to the OpenAI team.

Imagine if this were something as extreme as war. An unpredictable AI producing “complex and intelligent behavior” might bring about a swift end to any war, but at what cost?

Now, imagine if this AI could run on quantum hardware, potentially working through some kinds of problems far faster than today’s computers can!

OpenAI noted, “Building environments is not easy and it is quite often the case that agents find a way to exploit the environment you build or the physics engine in an unintended way.”

We haven’t reached a Terminator scenario yet, but the technology exists. Luckily, researchers understand that they can’t always predict or control what an AI does, so many research programs are working on the problem, and AI ethics is a hot topic.

In fact, OpenAI says its “mission is to ensure that artificial general intelligence benefits all of humanity.”

Right now, it’s incredible to see how AI behaves in simulated environments. The challenge is to keep that kind of unexpected behavior under control in real-world environments.

OpenAI concluded, “These results inspire confidence that in a more open-ended and diverse environment, multi-agent dynamics could lead to extremely complex and human-relevant behavior.”