Developers and researchers of facial recognition technology, such as the multinational tech company IBM, have the power to incite discussions over its use in law enforcement, as well as its regulation.

In a letter sent to the US Congress on June 8, IBM CEO Arvind Krishna outlined the company’s intention to cease offering, researching or developing its own facial recognition technology “in pursuit of justice and racial equality.”

Alongside this, Krishna outlined his company’s interest in working with Congress on police reform — specifically, holding law enforcement accountable for misconduct — and broadening skills and education opportunities for communities of color.

“IBM firmly opposes and will not condone uses of any technology, including facial recognition technology offered by other vendors, for mass surveillance, racial profiling, violations of basic human rights and freedoms,” Krishna wrote.

“Vendors and users of AI systems have a shared responsibility to ensure that AI is tested for bias, particularly when used in law enforcement, and that such bias testing is audited and reported,” he stressed.

“We believe now is the time to begin a national dialogue on whether and how facial recognition technology should be employed by domestic law enforcement agencies.”

As IBMers, we stand with the Black Community and want to empower and ensure racial equality. pic.twitter.com/twfLDA99ao

— IBM (@IBM) June 2, 2020

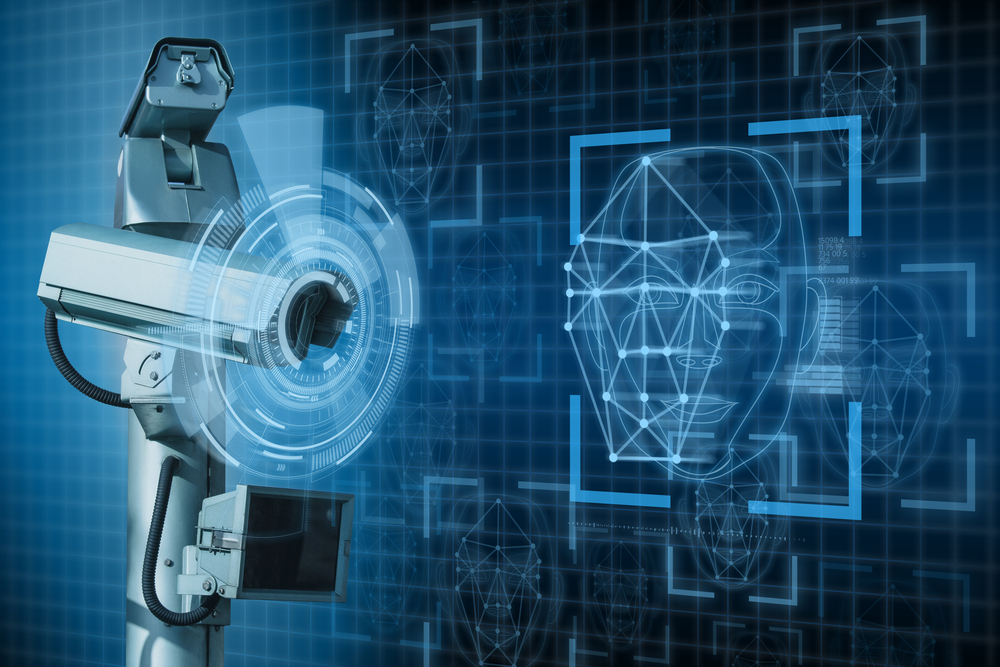

Although the first facial recognition technology was developed in the 1960s, it began to advance after 2012, when AI algorithms began to outpace human experts, a 2018 study published by PNAS (Proceedings of the National Academy of Sciences of the United States of America) showed.

The technology works by using AI-powered biometrics to map facial features from a photograph or video, and then comparing them with a database of known faces to find a match.
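To make the matching step concrete, here is a minimal sketch of comparing one face against a database of known faces. It assumes each face has already been reduced to a short list of numbers (a feature vector). The three-number vectors, the names `person_a` and `person_b`, the `find_match` helper and the 0.9 threshold are all invented for illustration and do not describe any vendor's actual system.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def find_match(probe, gallery, threshold=0.9):
    """Compare a probe vector with every known face in the gallery.

    Returns (name, score) for the most similar entry if its score
    reaches the threshold, otherwise (None, best_score).
    The threshold value is an assumption chosen for this example.
    """
    best_name, best_score = None, -1.0
    for name, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score > best_score:
            best_name, best_score = name, score
    if best_score >= threshold:
        return best_name, best_score
    return None, best_score


# Hypothetical database of known faces (made-up feature vectors).
gallery = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.2, 0.8, 0.5],
}

# Hypothetical features extracted from a new photograph.
probe = [0.88, 0.12, 0.31]

match, score = find_match(probe, gallery)
print(match, round(score, 3))
```

The threshold is the key design choice in a sketch like this: set it too low and the system declares matches between different people, set it too high and it misses genuine ones.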

Racial bias

However, in 2018, researchers began to publish studies addressing how machine learning algorithms can discriminate based on race and gender. One of them, ‘Gender Shades,’ showed that Black and brown women were the most misclassified group, with error rates of up to 34.7%.

The same year, a report by the ACLU (American Civil Liberties Union) revealed that Amazon’s facial recognition technology, Rekognition, had falsely identified 28 members of Congress as people who had been arrested for a crime. These false matches disproportionately affected people of color.

In December 2019, a NIST (National Institute of Standards and Technology) study evaluated 189 software algorithms from 99 different developers, which the institute deemed to represent the majority of the facial recognition software industry.

The results indicated that across the board, false positive matches (where the software wrongly considers photos of two different individuals to show the same person) affected Asian and African American communities at a higher rate — between 10 and 100 times more — than Caucasians.
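As a rough illustration of how such a disparity can be measured, the sketch below computes a false positive (false match) rate for two groups and the ratio between them. The group labels, the counts and the `false_match_rate` helper are hypothetical and are not taken from the NIST study.

```python
def false_match_rate(comparisons):
    """Share of different-person pairs that the software wrongly matched.

    `comparisons` is a list of (same_person, declared_match) booleans.
    Only pairs showing two different people count toward the rate.
    """
    impostor_results = [declared for same, declared in comparisons if not same]
    if not impostor_results:
        raise ValueError("no different-person comparisons to evaluate")
    return sum(impostor_results) / len(impostor_results)


# Made-up results: 10,000 different-person comparisons per group.
group_x = [(False, True)] * 1 + [(False, False)] * 9_999
group_y = [(False, True)] * 50 + [(False, False)] * 9_950

fmr_x = false_match_rate(group_x)
fmr_y = false_match_rate(group_y)

# How many times more often group_y is falsely matched than group_x.
disparity = fmr_y / fmr_x
print(f"group_x: {fmr_x:.4%}  group_y: {fmr_y:.4%}  ratio: {disparity:.0f}x")
```

With these invented counts, the second group is falsely matched about 50 times as often as the first, which falls inside the 10-to-100-times range the study reported.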

The reason?

The data sets on which these algorithms are trained were found to be overwhelmingly composed of lighter-skinned subjects, who in some cases make up as much as 86.2% of the data, studies calculate.

The result?

The same study concluded that demographic groups that are underrepresented in benchmark datasets can nonetheless be subjected to frequent targeting by a law enforcement system that is more likely to stop them, and therefore to subject them to facial recognition searches.

Facial recognition systems therefore seem to be least effective for the populations they are most likely to affect.

Law enforcement and facial recognition technology

Although police departments in San Francisco and Oakland, California, and Somerville, Massachusetts, are banned from using facial recognition technology, it is used by law enforcement in places such as New York, Los Angeles, Florida and Chicago, as well as by the FBI in 16 states.

A 2016 study published by the Center on Privacy and Technology at Georgetown Law indicates that half of American adults — over 117 million people — are in a law enforcement face recognition network.

As long as police departments continue to use this technology, the false positive matches it produces, which disproportionately affect people of color, could lead to false arrests, claims Joy Buolamwini, an MIT Media Lab digital activist and co-author of the 2018 ‘Gender Shades’ study, in a letter to the Algorithmic Justice League.

And for people of color, being in police custody is dangerous. Unarmed black men, for example, were killed by police at 5 times the rate of unarmed whites in 2015, data from the Mapping Police Violence database shows.

The recent murder of George Floyd, a 46-year-old black man, at the hands of a police officer in Minneapolis was a stark reminder of this for the world.

Performative allyship

Although Floyd was not the first and will not be the last black man to die in police custody, his murder sparked an outpouring of declarations by individuals, brands, politicians and organizations around the world in support of the fight against racial injustice.

Brands such as Nike and L’Oréal published Instagram posts on #BlackoutTuesday, a social media movement that took place on June 2 to protest against racial inequality. They declared their commitment to fighting systemic racism and encouraged their customers to do the same, despite not having actively prioritized diversity and inclusion in the past.

L’Oréal, in particular, received accusations of hypocrisy and performative allyship after pledging to “stand in support of the Black community” despite, at the time, not having publicly apologized to black trans model and activist Munroe Bergdorf, whom it dropped from a 2017 campaign after she spoke out publicly against racism and white supremacy.

A week later, the cosmetics company issued a public apology to Bergdorf and invited her to join its UK Diversity and Inclusion Advisory Board.

Major tech companies, such as Amazon, Google, Microsoft and Intel, also aligned themselves with the fight against racial injustice and participated in the #BlackoutTuesday movement.

Yet these companies all either use or develop facial recognition technology.

Intel, for example, uses the technology to help identify high-risk individuals who enter its offices on a daily basis.

Amazon’s own image and video analysis service, Rekognition, “help[s] identify objects, people, text, scenes, and activities in images and videos, as well as detect any inappropriate content.”

The company also sells the service to law enforcement agencies in the US, which academics, human and civil rights groups, and the company’s own workers and shareholders have campaigned against.

Update June 10, 5:09PM COT: On the same day this article was published, Amazon issued a statement saying:

We’re implementing a one-year moratorium on police use of Amazon’s facial recognition technology. We will continue to allow organizations like Thorn, the International Center for Missing and Exploited Children, and Marinus Analytics to use Amazon Rekognition to help rescue human trafficking victims and reunite missing children with their families.

We’ve advocated that governments should put in place stronger regulations to govern the ethical use of facial recognition technology, and in recent days, Congress appears ready to take on this challenge. We hope this one-year moratorium might give Congress enough time to implement appropriate rules, and we stand ready to help if requested.

Although Microsoft refuses to sell its facial recognition technology to law enforcement agencies, it continues to develop ‘Face,’ an AI service that analyzes faces in images to guarantee a “highly secure user experience.”

And Google, despite recognizing the need to fairly regulate the technology, continues to develop Face Match Technology, which uses front-facing cameras on laptops and computers to scan user profiles, for “convenience” and in order to provide “personalized information.”

While facial recognition technology continues to show racial bias, this raises the question of whether these tech companies should be accused of the same hypocrisy as brands like L’Oréal.

IBM’s decision to abandon facial recognition technology is an example of how to stimulate debate over whether the tech should be used in a law enforcement system which discriminates against people of color.

And although abandoning facial recognition technology might not be the answer for all tech companies that develop and use it — regardless of the capacity in which they do so — they have the power to also incite these same discussions over its regulation and make sure it is tested on accurate data sets before it is released.

Until then, their allyship in the fight for racial justice will continue to be performative.
