Governments may use information gleaned from AI to monitor citizen happiness in beneficial ways, but this type of data collection raises ethical concerns.

The Japanese company NTT DATA has recently collaborated with the Spanish startup Social Coin in a bid to develop an Artificial Intelligence platform that gleans social data from different places around the world.

This raises the question: to what end? I decided to dig a little deeper into the full potential and ramifications of using such an AI application in governance.

With the rapid rise in the development of Artificial Intelligence, we should expect it to be used in every sector of society in the near future. From healthcare, the military, and aviation to computer science and education, our everyday lives will be inexorably transformed by Artificial Intelligence.

Given the conveniences AI promises, governments will surely look to apply this new technology to citizen services. That they do so ethically is of the utmost importance.

An article entitled Artificial Intelligence for Citizen Services and Government, written by Hila Mehr, a fellow at the Harvard Ash Center for Democratic Governance and Innovation, highlights the beneficial ways in which AI can be used in citizen services.

There are several quite benign ways in which Artificial Intelligence could help governments interact with their citizens. For one, AI could help answer questions on help lines.

For example, according to Mehr, “in a North Carolina government office, chatbots — auditory or textual computerized conversational systems, which are frequently AI-based — free up the help center operators’ line, where nearly 90 percent of calls are just about basic password support, allowing operators to answer more complicated and time-sensitive inquiries.” AI could help with basic support, thus freeing up humans to deal with more pressing matters.

AI can help with scanning and searching documents, taking much unnecessary human effort out of the picture. Routing requests, translating, and drafting documents are other ways in which AI could be of use to the government.

With AI assisting in these quantitative tasks, human government workers, who were previously steeped in a morass of paperwork and numbers, would be free to address citizens' demands.

For AI to succeed in the government sector, it needs to be implemented with care. AI needs to be part of a citizen-centric, goal-based program. It should not be used simply because it is there, but because it makes interactions between governments and citizens easier.

The government must seek citizen input: “governments should enable a genuine participatory, grassroots approach to both demystify AI as well as offer sessions for citizens to create an agenda for AI while addressing any potential concerns,” suggests Hollie Russon-Gilman in Mehr’s article. AI use in government must be a conversation, not a one-sided affair with no input from the average citizen.

Russon-Gilman served as policy adviser on open government and innovation in the White House Office of Science and Technology Policy.

The two most important factors to take into account with AI are these: first, the technology should augment existing workers, not replace them; second, critical decisions should not be handed over to AI. Mehr states that, “given the ethical issues surrounding AI and continuing developments in machine learning techniques, AI should not be tasked with making critical government decisions about citizens.

For example, the use of a risk-scoring system used in criminal sentencing and similar AI applications in the criminal justice system were found to be biased, with drastic repercussions for the citizens sentenced.”

The issue here is this: what happens when AI gets too smart for its own good? If the machines throw off the yokes of their masters and take on a will of their own, it could be a dark day indeed. That is why confining early AI use to low-risk applications is so important.

We must monitor these low-risk applications and see in which direction they head before implementing more advanced AI, for while machine-learning Artificial Intelligence has the potential to make our lives significantly easier, it could also be the downfall of humanity.

As with all new technologies, AI has the very real potential to be grossly misused. The great Enlightenment philosopher Immanuel Kant wrote that “the sovereign wants to make the people happy as he thinks best, and thus becomes a despot, while the people are unwilling to give up their universal human desire to seek happiness in their own way, and thus become rebels.”

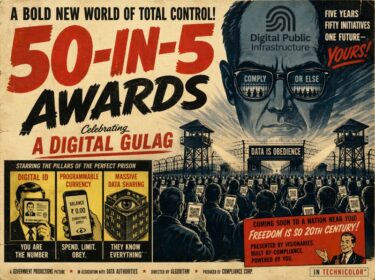

In other words, a ruler who tries to impose happiness on his citizens instead sows the seeds of the state’s demise. The collaboration between NTT DATA and Social Coin, a project through which the state of citizens’ happiness can be gauged, has eerie overtones of an Orwellian state.

“He who has large amounts of data can manipulate people in subtle ways. But even benevolent decision-makers may do more wrong than right,” says Dirk Helbing, one of the co-authors of an article entitled “Will Democracy Survive Big Data and Artificial Intelligence?” in Germany’s Spektrum der Wissenschaft. In China, this manipulation is happening in not-so-subtle ways.


China has implemented what is called a “Citizen Score” for every citizen of its massive nation. It is a linear score of a person’s behavior in society, which can be monitored through CCTV cameras and social media. For example, if a person jaywalks, a street camera records it and their score goes down. If a citizen’s score falls too low, they can be barred from purchasing train tickets or making restaurant reservations.

The implications of this are dark indeed. According to an article entitled “China’s Social Credit System puts its people under pressure to be model citizens,” published recently in The Conversation, “Liu Hu, a vocal journalist who has criticised government officials on social media, was accused of ‘spreading rumour and defamation.’

While seeking legal redress in early 2017, he realised that he was blacklisted as ‘untrustworthy’ and prohibited from purchasing plane tickets.” It seems the ancient Confucian ideal of xinyong, or trustworthiness, is being twisted by the Communist state into a way for those in power to control how their subjects behave, down to the micro level.

Once Artificial Intelligence is used to monitor citizens’ happiness and behavior, the next logical step seems to be control. Governments may intend to use information gleaned from artificial intelligence for the benefit of citizens, but China already shows us what can happen under despotism. We must tread carefully in the realm of AI data collection; before we know it, it may be too late.