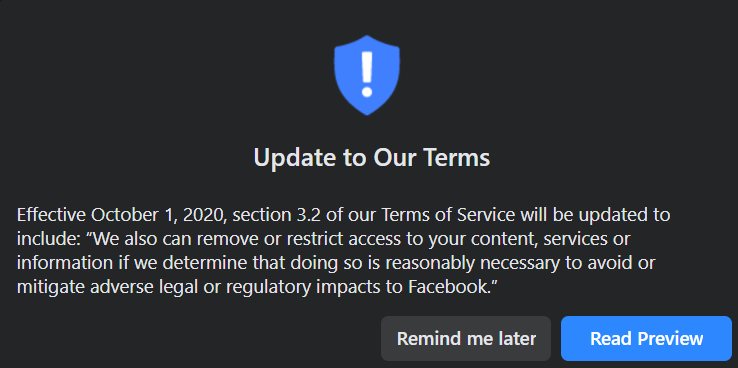

Facebook will start removing user content that could get the social media giant into legal or regulatory trouble in a move that appears to be in anticipation of legislative reform surrounding digital platforms and publishers.

Starting October 1, Facebook will update section 3.2 of its Terms of Service to include:

“We also can remove or restrict access to your content, services or information if we determine that doing so is reasonably necessary to avoid or mitigate adverse legal or regulatory impacts to Facebook”

The update to the terms of service looks to be a preventative measure to avoid some legal battles in the future, but Facebook still has a lot of adversaries in Washington, DC, and is constantly under attack by politicians from the left, right, and center about its policies and how they are enforced.

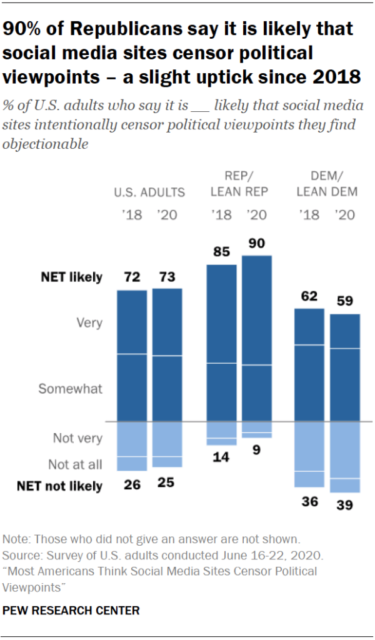

Source: Pew Research

From the left, Facebook is accused of propping up far-right hate groups and giving so-called conspiracy theorists a platform.

From the right, Facebook is accused of censoring conservative values.

I think both sides are partially correct but are so blinded by their own political agendas that they don’t see the overall picture.

While many of Facebook’s content moderators have been mostly left-leaning and Flat Earth groups still thrive on the platform, I believe Facebook will continue to do what’s in its own best interests as it always has, regardless of what’s best for society.

Right now, the social media giant is trying to play nice with both sides, but it can’t keep up this war of attrition forever.

And with the threat of Section 230 reform, it appears as though Facebook is bracing for major changes while covering its legal tracks with the update to its terms of service.

On June 17, US Attorney General William Barr issued a statement saying that Section 230 of the Communications Decency Act of 1996 was outdated and needed to be reformed to face the challenges surrounding today’s online content.

The attorney general and the DOJ outlined four reform proposals:

- Promoting Open Discourse and Greater Transparency

- Incentivizing Online Platforms to Address Illicit Content

- Clarifying Federal Government Enforcement Capabilities to Address Unlawful Content

- Promoting Competition

“Taken together, these reforms will ensure that Section 230 immunity incentivizes online platforms to be responsible actors,” said AG Barr.

“These reforms are targeted at platforms to make certain they are appropriately addressing illegal and exploitive content while continuing to preserve a vibrant, open, and competitive internet,” he added.

Barr’s remarks came one day after Google had become embroiled in yet another scandal, in which the tech giant was accused of threatening to demonetize media outlets Zero Hedge and The Federalist, allegedly at the behest of NBC, for not taking steps to remove inappropriate comments submitted by outside parties.

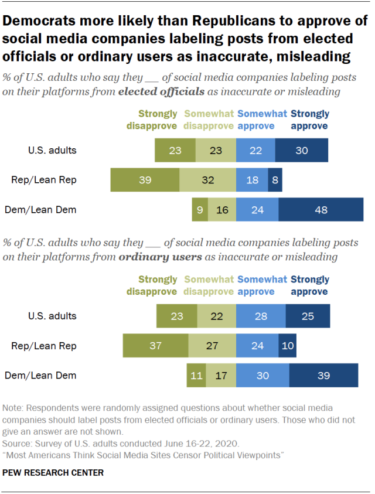

Source: Pew Research

Now Facebook is saying it will censor anything it deems “reasonably necessary to avoid or mitigate adverse legal or regulatory impacts to Facebook,” which looks a lot like it’s anticipating reforms to Section 230, like those that the DOJ proposed.

While Facebook’s new policy update looks to keep the company out of legal trouble concerning Section 230 reform, the social media giant is still facing an ongoing antitrust investigation.

And for all of its censorship efforts in trying to keep up the appearance of a socially conscious platform, its core business model is antithetical to those very efforts.

What only a few on Capitol Hill realize is that Facebook’s entire business model is poison, and its algorithms amplify division and polarization by design.

In June, UC Berkeley professor and expert in digital forensics Dr. Hany Farid testified before the House Committee on Energy and Commerce that Facebook has a toxic business model that puts profit over the good of society and that its algorithms have been trained to encourage divisiveness and the amplification of misinformation.

“Mark Zuckerberg is hiding the fact that he knows that hate, lies, and divisiveness are good for business,” Farid testified.

“They didn’t set out to fuel misinformation and hate and divisiveness, but that’s what the algorithms learned.”

What we are witnessing with the left and right accusing Facebook of either too much censorship or not enough of it is a reflection of the polarity that the algorithms were designed to amplify.

However, I don’t like to place all the blame on a bogeyman like Facebook. While the technology is powerful, we are individual human beings capable of rational thought, and we don’t have to add to the polarization if we so choose, but this takes tremendous willpower.

Social media platforms like Facebook make it very difficult for users not to become polarized because that’s what the algorithms have learned.

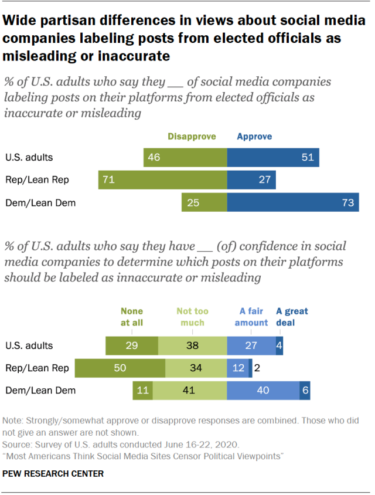

Source: Pew Research

Dr. Farid testified that “Algorithms have learned that the hateful, the divisive, the conspiratorial, the outrageous, and the novel keep us on the platforms longer, and since that is the driving factor for profit, that’s what the algorithms do.”

“The core poison here is the business model. The business model is that when you keep people on the platform, you profit more, and that is fundamentally at odds with our societal and democratic goals.”

The social media giant constantly says that it doesn’t benefit from hate, and Zuckerberg has repeatedly said he doesn’t want the platform to be the arbiter of truth, yet that’s exactly what it has become because Facebook controls the flow of information by deciding what gets censored or approved.

“When technology takes control of our information environment, it takes control of humanity” — Tristan Harris

Across the globe, billions of people use only a handful of platforms to receive all of their news and information, but free citizens can only make informed decisions based on the quality of information available to them.

When all the information is filtered through just a few corporations, the information becomes a tool for control.

According to former Google ethicist and current president of the Center for Humane Technology Tristan Harris, “When technology takes control of our information environment, it takes control of humanity.”

“Facebook and these services actually already run the world,” he added.

“Because if they run our information systems, they run our voting systems, they run whether people get vaccinated, they run whether there is violence in our streets, how can we keep our humanity at the center when this process is happening?”

With their dominance over the flow of information through search, news, and social media, big tech platforms have been creating an unelected technocracy for years.

“Liberty must at all hazards be supported” — John Adams

On October 1, Facebook will begin cracking down with even more censorship to cover its legal behind, yet censorship destroys the very diversity of thought that it was meant to protect.

In the quest to combat fake news and misinformation, big tech companies and social media platforms have censored medical doctors, scientists, politicians, and entire news publications because of what they deemed to be potentially “hazardous” information.

John Adams declared way back in 1765 that “Liberty must at all hazards be supported,” but what happens if we apply that to today’s social media standards?

Once the wheels of censorship and information manipulation begin to spin, it’s very hard to put on the brakes, and liberty is sacrificed for the so-called health and safety of the people.
